Bridging the Diagnostic Gap of Standard DNNs

Fault Detection, Deep Neural Networks, Hierarchical Classification, Explainable AI

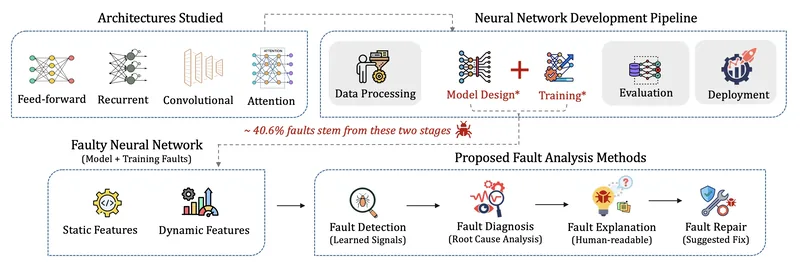

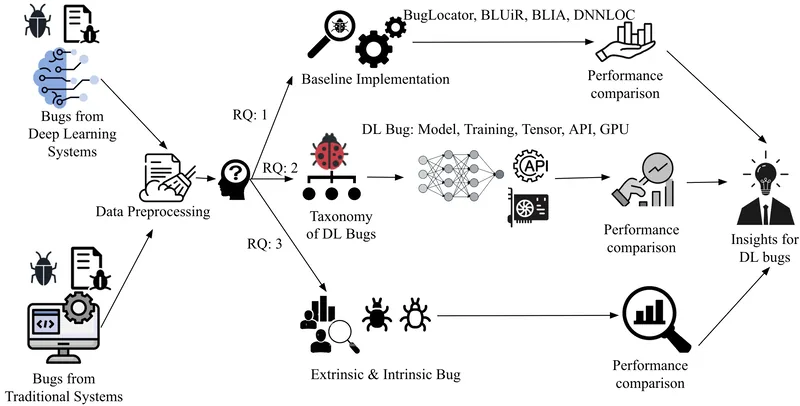

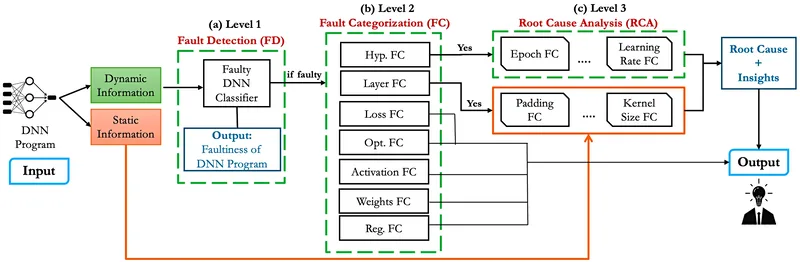

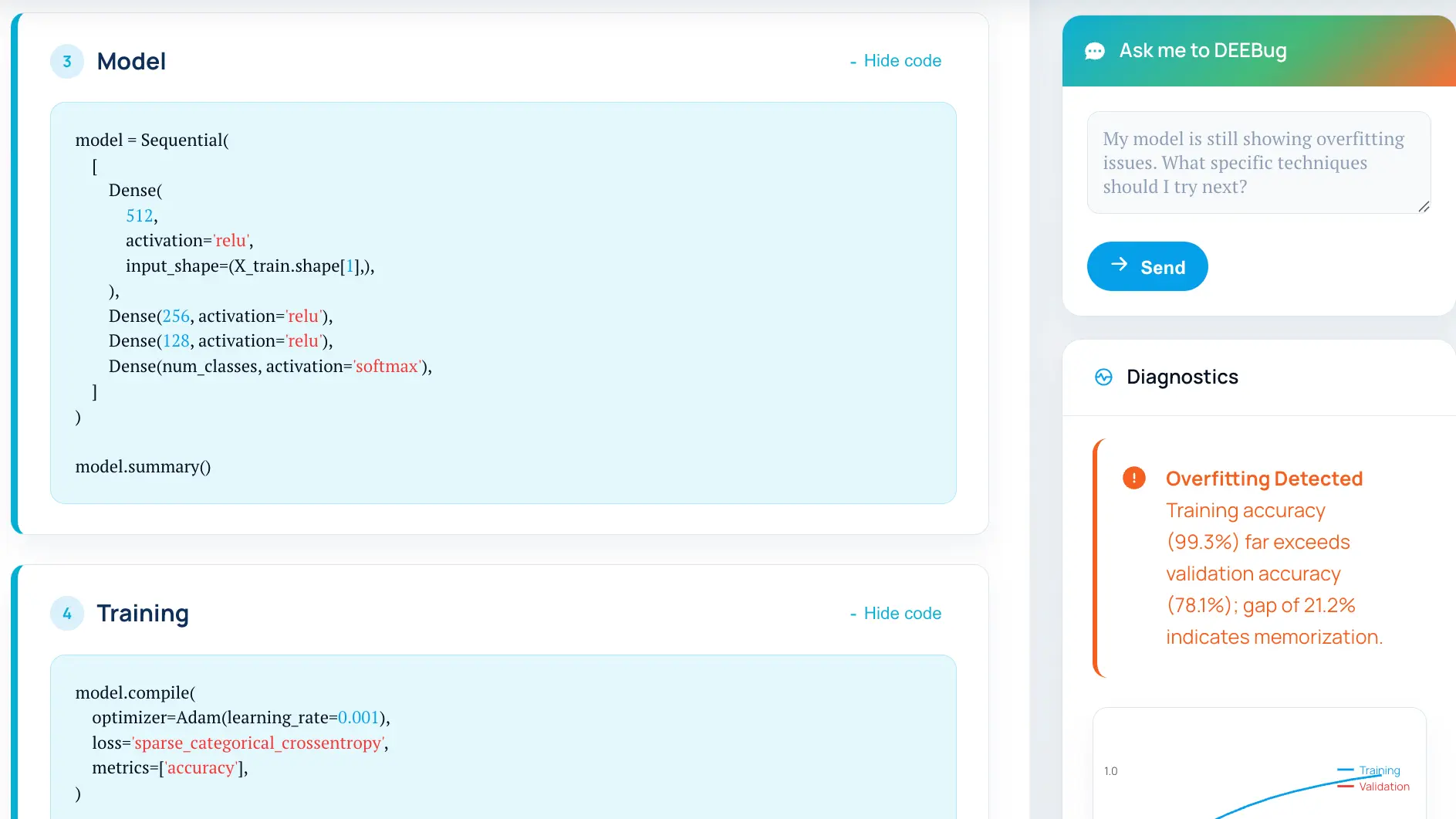

Building on the diagnostic gap findings, this work introduces DEFault, a novel hierarchical fault diagnosis technique that combines static and dynamic analysis to address both model-related and training-related faults in deep neural networks. DEFault uses a three-stage hierarchical learning approach, built on 14,000 DNN programs, that covers all major fault categories across feedforward, convolutional, and recurrent neural networks. It also provides interpretable explanations for the faults it detects. Together, this coverage and explainability make DEFault the most comprehensive fault diagnosis solution for standard DNN architectures, and it achieves 11.54% higher performance than four state-of-the-art approaches.
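To make the idea of a staged, hierarchical diagnosis concrete, the following is a minimal sketch and not DEFault's implementation. The text above does not specify what each of the three stages does, so the split used here (detect a fault, assign a coarse category, list supporting evidence) is an assumption. The feature names (`initial_loss`, `final_loss`, `mean_grad_norm`), the threshold, and the hand-written rules are all hypothetical stand-ins for learned classifiers.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical features, imagined as coming from static and dynamic analysis.
Features = Dict[str, float]

GRAD_EPS = 1e-6  # assumed threshold for "vanished" gradients


@dataclass
class Diagnosis:
    faulty: bool
    category: Optional[str] = None
    evidence: List[str] = field(default_factory=list)


def detect_fault(f: Features) -> bool:
    """Stage 1 (assumed): decide whether the program is faulty at all."""
    return f["final_loss"] >= f["initial_loss"] or f["mean_grad_norm"] < GRAD_EPS


def categorize_fault(f: Features) -> str:
    """Stage 2 (assumed): assign a coarse fault category."""
    return "model" if f["mean_grad_norm"] < GRAD_EPS else "training"


def explain_fault(f: Features) -> List[str]:
    """Stage 3 (assumed): list the features whose rules fired."""
    evidence = []
    if f["mean_grad_norm"] < GRAD_EPS:
        evidence.append("mean_grad_norm")
    if f["final_loss"] >= f["initial_loss"]:
        evidence.append("final_loss")
    return evidence


def diagnose(f: Features) -> Diagnosis:
    # Later stages run only when the earlier stage reports a fault.
    if not detect_fault(f):
        return Diagnosis(faulty=False)
    return Diagnosis(True, categorize_fault(f), explain_fault(f))


healthy = {"initial_loss": 2.3, "final_loss": 0.4, "mean_grad_norm": 0.05}
vanishing = {"initial_loss": 2.3, "final_loss": 2.3, "mean_grad_norm": 1e-9}

print(diagnose(healthy))
print(diagnose(vanishing))
```

The point of the cascade is that each stage narrows the question for the next one: a program that passes the first check never reaches categorization, and the evidence list is only produced for programs already judged faulty.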